Effects of packet/data sizes on various networks

I was thinking about peer-to-peer networking (in the context of Pettycoin, of course) and I wondered if sending ~1420 bytes of data is really any slower than sending 1 byte on real networks. Similarly, is it worth going to extremes to avoid crossing over into two TCP packets?
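Whether a payload crosses into a second packet is simple arithmetic once you assume a per-packet payload size. Here is a minimal sketch, taking the ~1420 bytes mentioned above as the usable payload per packet. That figure is an assumption, since the real one depends on the path MTU and TCP options. `ASSUMED_MSS` and `segments_needed` are names made up for illustration:

```python
# Assumption: usable TCP payload per packet, taken from the "~1420 bytes"
# figure in the post. Real paths may differ.
ASSUMED_MSS = 1420


def segments_needed(payload_bytes, mss=ASSUMED_MSS):
    """Return how many full-or-partial packets a payload would occupy."""
    if payload_bytes <= 0:
        return 0
    # Ceiling division using integers only.
    return -(-payload_bytes // mss)


if __name__ == "__main__":
    for size in (1, 1420, 1421, 2841, 9901):
        print(size, "bytes ->", segments_needed(size), "packet(s)")
```

This only counts packets. It says nothing about how long they take, which is what the measurements below are for.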

So I wrote a simple Linux TCP ping pong client and server: the client connects to the server then loops: reads until it gets a '1' byte, then it responds with a single byte ack. The server sends data ending in a 1 byte, then reads the response byte, printing out how long it took. First 1 byte of data, then 101 bytes, all the way to 9901 bytes. It does this 20 times, then closes the socket.
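The loop described above can be sketched roughly as follows. This is not the original program, just a Python approximation run over loopback. It assumes the padding bytes are `'0'`, that the whole size sweep is repeated 20 times, and that socket options are left at their defaults. The function names are made up for illustration:

```python
import socket
import threading
import time

SIZES = range(1, 10000, 100)  # 1, 101, ..., 9901 bytes
ROUNDS = 20                   # assumption: the whole sweep is repeated 20 times


def recv_until_marker(conn):
    """Read until a chunk ends in the b'1' marker; return False on EOF."""
    while True:
        chunk = conn.recv(65536)
        if not chunk:
            return False
        if chunk.endswith(b"1"):
            return True


def client(host, port):
    """Connect, then ack every '1'-terminated message with a single byte."""
    with socket.create_connection((host, port)) as conn:
        while recv_until_marker(conn):
            conn.sendall(b"A")


def serve_once(listener, sizes=SIZES, rounds=ROUNDS):
    """Accept one client and time each send-then-ack round trip."""
    conn, _ = listener.accept()
    results = []
    with conn:
        for _ in range(rounds):
            for size in sizes:
                # Assumption: padding is '0' bytes, so only the last byte is '1'.
                payload = b"0" * (size - 1) + b"1"
                start = time.perf_counter()
                conn.sendall(payload)
                if not conn.recv(1):
                    raise ConnectionError("client closed early")
                results.append((size, time.perf_counter() - start))
    return results


if __name__ == "__main__":
    listener = socket.create_server(("127.0.0.1", 0))
    port = listener.getsockname()[1]
    threading.Thread(target=client, args=("127.0.0.1", port), daemon=True).start()
    timings = serve_once(listener, rounds=2)
    listener.close()
    for size, secs in timings[:3]:
        print(f"{size} bytes: {secs * 1e6:.0f} us")
```

Loopback timings say nothing about real networks, of course. To reproduce anything like the graphs, the client and server need to run on different machines.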

Here are the results on various networks (or download the source and result files for your own analysis):

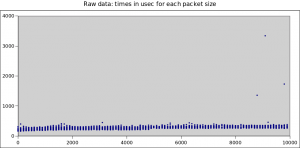

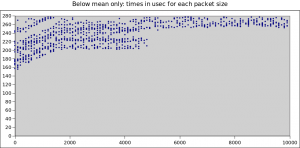

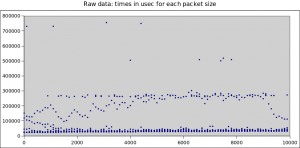

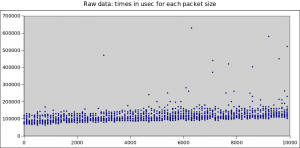

On Our Gigabit LAN

Interestingly, we do win for tiny packets, but there's no real penalty once we're over a packet (until we get to three packets' worth):

Figure: Over the Gigabit LAN

Figure: Over the Gigabit LAN (closeup)

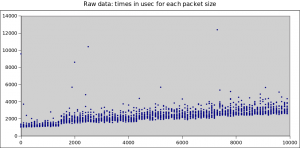

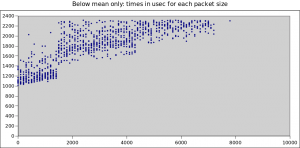

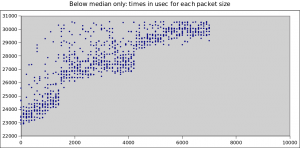

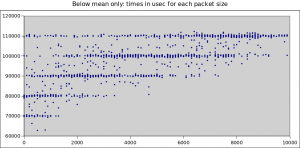

On Our Wireless LAN

Here we do see a significant decline as we enter the second packet, though extra bytes in the first packet aren't completely free:

Figure: Wireless LAN (all results)

Figure: Wireless LAN (closeup)

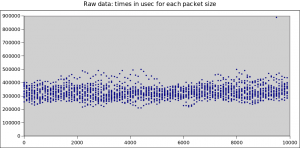

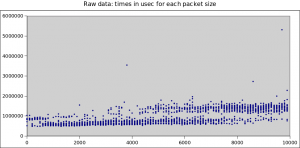

Via ADSL2 Over The Internet (Same Country)

Ignoring the occasional congestion from other uses of my home net connection, we see a big jump after the first packet, then another as we go from 3 to 4 packets:

Figure: ADSL over internet in same country

Figure: ADSL over internet in same country (closeup)

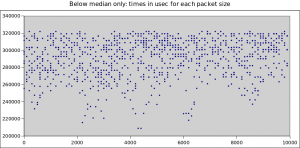

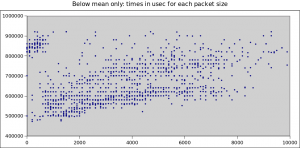

Via ADSL2 Over The Internet (Australia <-> USA)

Here, packet size is completely lost in the noise; the carrier pigeons don't even notice the extra weight:

Figure: Wifi + ADSL2 from Adelaide to US

Figure: Wifi + ADSL2 from Adelaide to US (closeup)

Via 3G Cellular Network (HSPA)

I initially did this with Wifi tethering, but the results were weird enough that Joel wrote a little Java wrapper so I could run the test natively on the phone. It didn't change the resulting pattern much, but I don't know if this regularity of delay is a 3G or an Android thing. Here every packet costs, but you don't win a prize for having a short packet:

Figure: 3G network

Figure: 3G network (closeup)

Via 2G Network (EDGE)

This one actually gives you a penalty for short packets! 800 bytes to 2100 bytes is the sweet spot:

Figure: 2G (EDGE) network

Figure: 2G (EDGE) network (closeup)

Summary

So if you're going to send one byte, what's the penalty for sending more? Eyeballing the minimum times from the graphs above:

| | Wired LAN | Wireless | ADSL | 3G | 2G |
|---|---|---|---|---|---|
| Penalty for filling packet | 30% | 15% | 5% | 0% | 0%* |
| Penalty for second packet | 30% | 40% | 15% | 20% | 0% |
| Penalty for fourth packet | 60% | 80% | 25% | 40% | 25% |

\* Average for EDGE actually improves by about 35% if you fill packet
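The percentages above were eyeballed from the graphs. If you want to compute something similar from the result files, one plausible definition is the relative increase in minimum round-trip time over the 1-byte case. That definition is an assumption about how "penalty" is meant here, and the timings below are hypothetical placeholders, not measured data:

```python
def penalty_percent(baseline_times, candidate_times):
    """Relative increase of the minimum time over the baseline minimum, in %."""
    base = min(baseline_times)
    cand = min(candidate_times)
    return (cand - base) / base * 100.0


if __name__ == "__main__":
    # Hypothetical round-trip times in microseconds (NOT from the post's data).
    one_byte = [200, 220, 310]
    full_packet = [260, 270, 400]
    print(f"penalty for filling packet: {penalty_percent(one_byte, full_packet):.0f}%")
```

Using the minimum rather than the mean filters out the occasional congestion spikes, at the cost of hiding effects like the EDGE average noted above.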